This IDC white paper puts the evolution of data platforms into layman’s terms. My takeaway is that the unshackling of information architects and applications from the constraints of the traditional RDBMS will continue. Many of the design choices that the article details are grounded in the historical limitations of the data platform. The comments made under the Future Outlook segment are key:

“Trying to make definitive statements about the state of analytic-transaction data platforms going forward is challenging, because both the database kernel technology and the hardware on which it runs are evolving at a rapid pace. In addition to this, new workloads and mounting performance requirements add even more to the pace of development. It is safe to say that all the technology described in this study, admittedly in a very abstract manner, may be described as transitional technology that is evolving quickly. New approaches to data structures, new optimizations for transactional data once it is fully freed from the constraints of disk optimization, new ways of organizing processors and memory, and the introduction of non-volatile dual in-line memory modules (NVDIMMs) all will no doubt result in technologies within 10 years that are very different from what is described here.”

While platforms and technologies are evolving (this discussion has additional detail here), I find the juxtaposition of the “ideal” view presented here and the reality of most data operations interesting. This article provides “Essential Guidance” focused on IT buyers and guidance on choosing the right technology platform.

The focus on hardware and technology tends to obscure an equally important part of the buying equation: can managers manage these new technologies to achieve the desired business impacts and resulting business benefits? For the most part the answer is a resounding NO. For these “next gen” implementations to work, organizations need to upgrade not only their platforms but also their management practices. The balance of this blog entry examines some of the areas that the IDC article focuses on from the management perspective of the Chief Data Officer or Enterprise Information Architect.

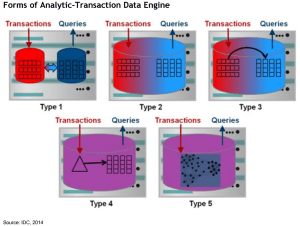

The Enterprise Data Warehouse. Traditionally the Enterprise Data Warehouse (EDW) has been considered the repository of the “single version of the truth”. However, when it comes to analytics, and to melding the transactional data store with analytics, this concept is hard to sustain. There is no one version of the truth; everything is context driven. The design alternatives presented in the article (see figure below) enable this in that they generally store both the transactional (source) version and the fully resolved EDW version. This allows users to hit both the transactional store AND the EDW, depending on the context they seek and how they want to interact with the data. Implicit in this view is that the context is captured in a machine-exploitable form that enables users to derive their own “single version of the truth”. This is a function of metadata, discussed below. Additionally, the article recognizes that the “one large database” solution is not generally a viable alternative, the issue being one of “manageability and agility.” This is somewhat contradicted in the opening “opinion” section, which talks about a canonical data model. However, I am going to assume that the canonical recommendation relates to the metadata and not the content.

In all of the platform options discussed in the paper (see below), data managers need to keep track of both transactional data and data within a fully resolved EDW. The context and the semantic meaning of the content of both of those data sources need to be managed, crosswalked, and communicated to the user community. This will involve an evolution in both management practices and tools.

Metadata. I like the way this paper addresses metadata:

“Metadata, including all data models and schemas in the relevant databases or data collections, must be harmonized, kept current with those databases, and mapped to higher order constructs, including a business glossary and, for data managed in common, a canonical data model, in order to facilitate the access and management of the data.”

The notion of mapping “higher order constructs” is key. While it is not always possible or feasible to create a canonical data model, it is very feasible to create a canonical metadata model (metamodel). This gives you a consistent way to fully describe your data regardless of the physical form it takes, and to link it to the higher order constructs the paper refers to. My article here talks to the role the enterprise plays in managing the metadata at the enterprise level.
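To make the metamodel idea a little more concrete, here is a minimal Python sketch of what such a structure might look like. It is only an illustration under my own assumptions: the class names (`GlossaryTerm`, `DataElement`, `MetadataRegistry`), the store names, and the fields are hypothetical and do not come from the IDC paper or from any particular tool. The point is simply that every physical field, whether it lives in a transactional store or the EDW, is described in the same shape and tied to a business glossary term.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class GlossaryTerm:
    """A higher order construct: one business glossary entry (hypothetical shape)."""
    term_id: str
    name: str
    definition: str


@dataclass
class DataElement:
    """One physical field, described the same way whatever store holds it."""
    store: str        # illustrative names such as "orders_oltp" or "edw"
    container: str    # table, collection, or file
    field_name: str
    data_type: str
    glossary_term_ids: set = field(default_factory=set)


class MetadataRegistry:
    """Holds glossary terms and the physical elements mapped to them."""

    def __init__(self):
        self._terms = {}
        self._elements = []

    def add_term(self, term):
        self._terms[term.term_id] = term

    def register(self, element):
        # Refuse mappings to glossary terms nobody has defined yet.
        unknown = element.glossary_term_ids.difference(self._terms)
        if unknown:
            raise KeyError(f"undefined glossary terms: {sorted(unknown)}")
        self._elements.append(element)

    def crosswalk(self, term_id):
        """Return every physical element mapped to one business term."""
        return [e for e in self._elements if term_id in e.glossary_term_ids]


registry = MetadataRegistry()
registry.add_term(GlossaryTerm("customer", "Customer", "A party that buys from us."))
registry.register(DataElement("orders_oltp", "orders", "cust_no", "int", {"customer"}))
registry.register(DataElement("edw", "dim_customer", "customer_key", "bigint", {"customer"}))

# The same business concept, located in both the transactional store and the EDW.
for element in registry.crosswalk("customer"):
    print(element.store, element.container, element.field_name)
```

The design choice worth noting is that the glossary term, not the table, is the anchor. The physical descriptions can differ as much as they like, while the crosswalk still gives users one place to ask where a concept lives.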

Managing the Evolution. The architectures discussed in the paper all require an evolution from the transactional data stores that exist today towards platforms that can respond to business needs rapidly, and with little or no latency. The “Type 5” platform in Figure 1 is the “Data Lake” that has become such a buzzword. In this configuration, there is a single data structure for both transactions and analytics. The ETL functions, number of indexes, and flexibility that can be applied to render the data all place a larger burden on the governance disciplines. Additionally, the process by which the organization integrates the business and IT activities requires formalizing in a way that breaks down the traditional silos.

Hampering the evolution at some level is the fact that the tool suites are not entirely intuitive. Tools to handle the mapping of the higher order constructs (concept systems, ontologies, taxonomies, reference data…) and the management of multiple dictionaries cannot easily be implemented without complex configuration and often coding. The tool vendors seem to be coming along, but many are still working to apply governance and curation within the context of table-based systems. The reality is that creating fully described data that is linked to higher order constructs, and managing these relationships, requires a collection of tools that must be configured to address your environment. It is not yet easy.

The Way Forward. Previously I have made the comment that the Information Architect, Enterprise Data Management Office, or CDO must initially focus on creating a tangible value proposition for the business side of the house. As long as data management is perceived as a function related to standards, governance and “protocol”, it will be perceived as slowing down the business and getting in the way of achieving business goals. This article details a scoped-down set of goals that lay the foundation for that initial value proposition. Once the enterprise data management function is able to make the case that it actually improves business operations and impacts key success metrics (e.g. revenue), what next?

This is where all the articles regarding CDOs seem to agree. The next step is all about outreach and engagement with the broader business community, potentially both internal and external to the organization. My recommendation here is to perform this activity using a framework that ensures the discussions stay focused on goals and practices, and result in actionable, measurable and prioritized recommendations. The CMMI Data Management Maturity Model (DMM) is one such framework. I am admittedly biased, as I helped create it, but for an independent opinion Bob Lambert at CapTech wrote a review that speaks volumes. The framework is used to engage in a series of workshops. These workshops serve to identify a maturity level, but more importantly they identify the business priorities and concerns as detailed by the workshop participants. This is critical, as the resulting recommendations inherently have buy-in from across the organization.

Because the Data Management Maturity Model evaluates capabilities at the “practice” level (i.e. what people actually do), it inherently details the next steps in terms of recommendations. In other words, do not try to create a semantically equivalent data model across the whole organization if you cannot even do it for a business unit or a project! Additionally, the model recognizes the relationships between functions. The end result is a holistic and integrated set of guidance for the overall data management strategy and implementation roadmap.

Organizations seeking to upgrade their data platforms to more closely resemble the “Analytic Transactional data platform” that enables the real-time enterprise as discussed in the IDC white paper will have greater success more quickly if they evolve their data management practices at the same time.