Subcommittee hearing on oversight of AI here

I like this quote: “I recommend that since so many risks of AI systems come from within relationships where people are on the bad end of an information asymmetry, lawmakers should implement broad, non-negotiable duties of loyalty, care, and confidentiality as part of any broad attempt to hold those who build and deploy AI systems accountable.”

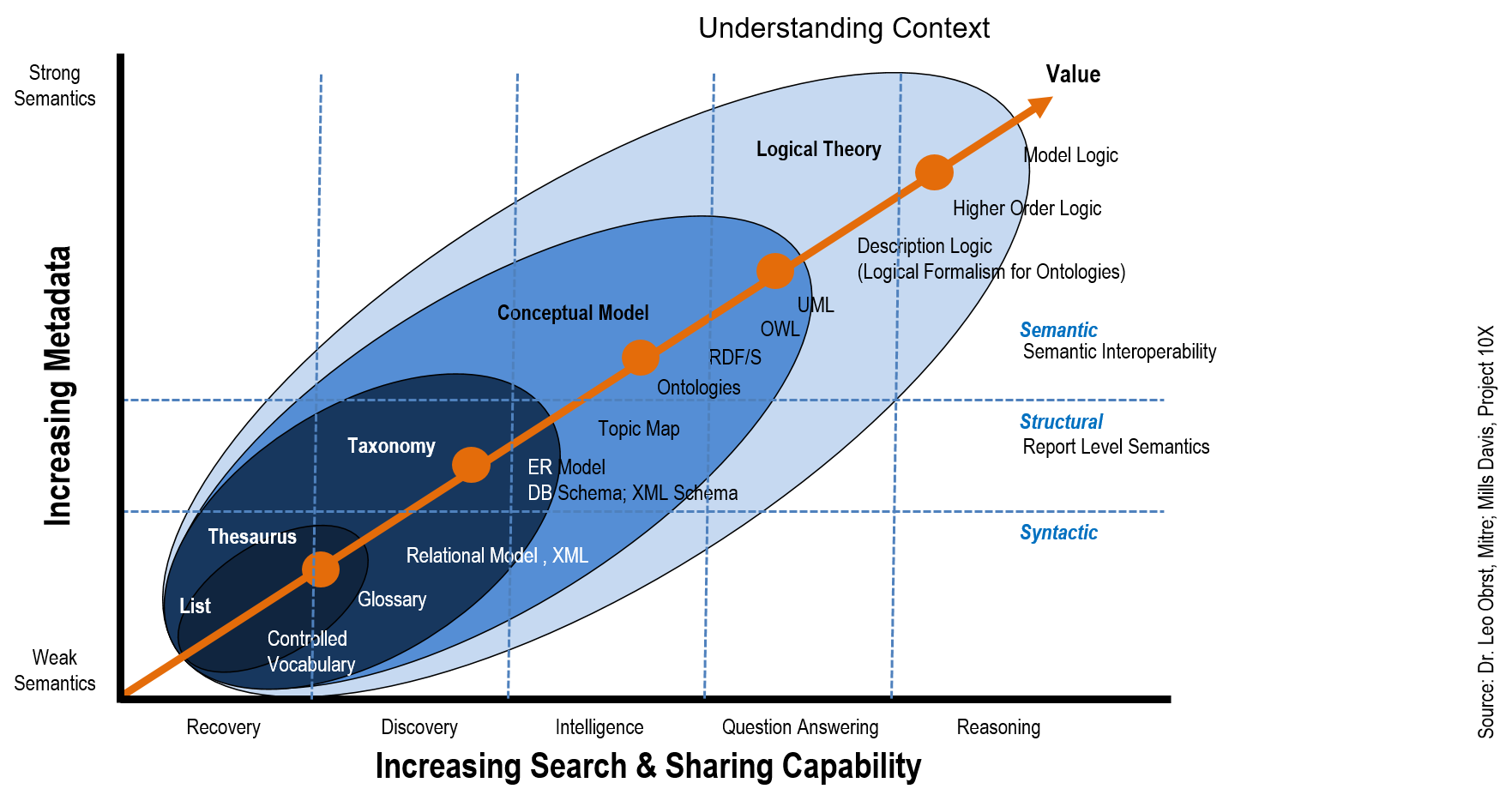

It seems to me that if one follows this logic, we end up with principle-based legislation, which will make it challenging to build control models. It will take time for best practices to emerge. Do we end up with something that looks like GDPR, but for AI?

Blumenthal & Hawley Announce Bipartisan Framework on Artificial Intelligence Legislation

Comprehensive framework would establish an independent oversight body, allow enforcers & victims to seek legal accountability for harms, promote transparency, & protect personal data

The good thing about the way this is written up is that many of the data and PII best practices already on the books are captured. Transparency and the handling of children’s data are the two that caught my eye.

SB-362 Data broker registration: accessible deletion mechanism. (2023-2024)

Much wailing and gnashing of teeth here. This is one of those things that sounds great in principle but will be complex in practice. Maybe in this day and age that applies to all privacy data management. My biggest issue is what organizations should do until this all gets sorted out: what does “good” look like from the regulator's perspective?

This is summed up in the following, reported by Alex LaCasse at the IAPP: “From a purely practical perspective, in a relatively short time period, there are now many varying privacy laws that require companies to quickly and wholly change their operations and technical infrastructure, let alone their business practices that are reliant on data,” Kelley Drye & Warren Partner Alysa Hutnik, CIPP/US, said. “In the meantime, companies are devoting millions to revamp their operations to comply with these laws in good faith, knowing that realistically their interpretation of these laws may be off, and many more millions of dollars will need to be spent to course-correct based on future regulations and regulatory guidance.”

I am reminded of a comment a lawyer friend made back in 2017 when GDPR was all the rage: “If you wait until the details are sorted out in court, then you will not have wasted millions. Far cheaper to pay me $60k to defend this position than to do a system upgrade and have to redo it every time legal opinions are released.” (And yes, he said $60k, which sounds too low to me!)

From a practical perspective, I keep coming back to the core privacy principles, which basically align with the GDPR and CCPA rights and obligations. We need to be able to execute on those rights at some level, get those foundations in place, and be in a position to fine-tune when the details emerge.

Core Principles:

- Lawfulness, fairness and transparency

- Purpose limitation

- Data minimization

- Accuracy

- Storage limitation

- Integrity and confidentiality (security)

- Accountability